Following 's process to create 2D game assets, I began playing around with DreamBooth. And I'm surprised by how good the results are!

First I trained it with a small set of 6 pixelated wizards, like these:

AI - Games - Security - Compilers

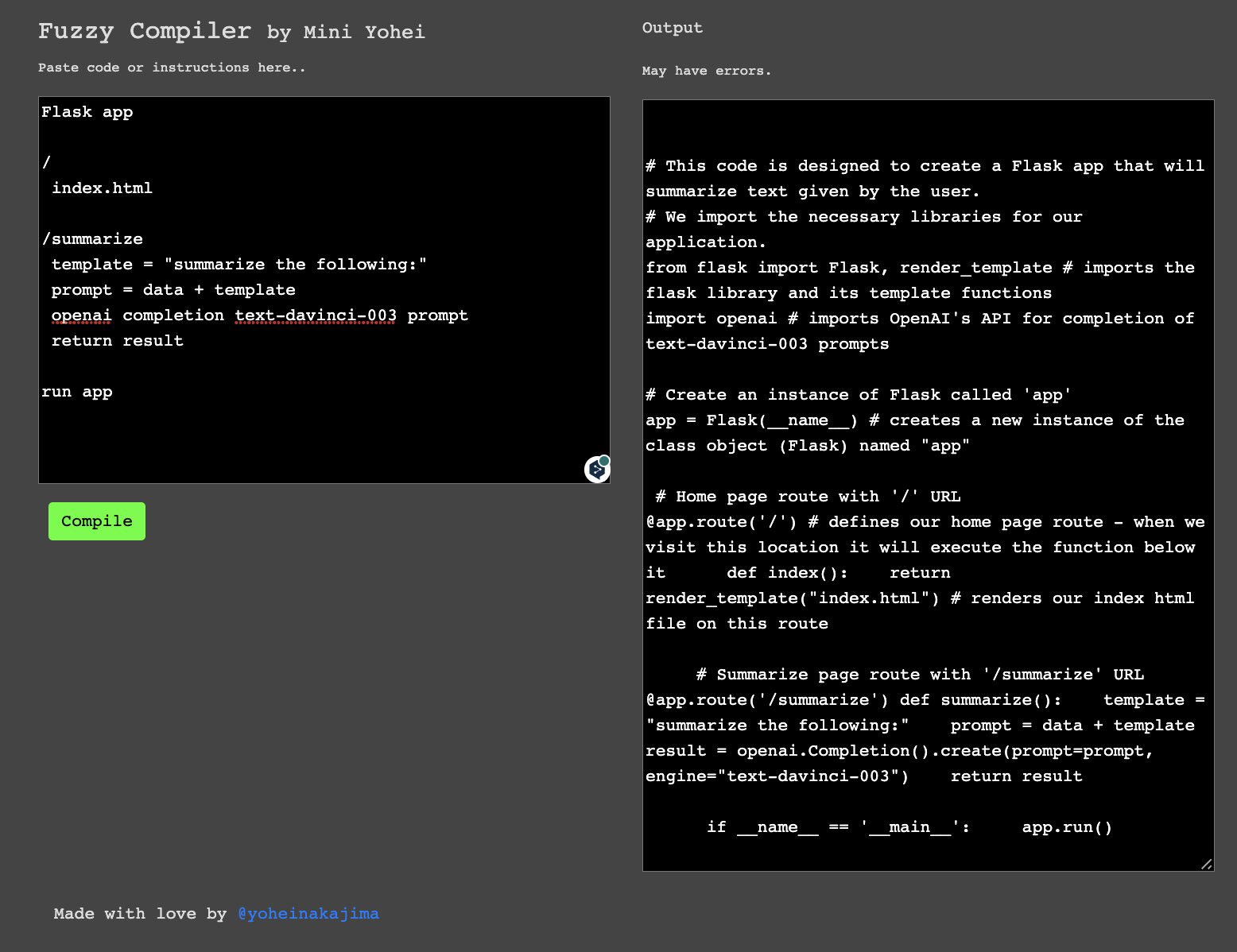

Just make up your own code, no worrying about syntax. Translates to working code w comments.

See example 1:

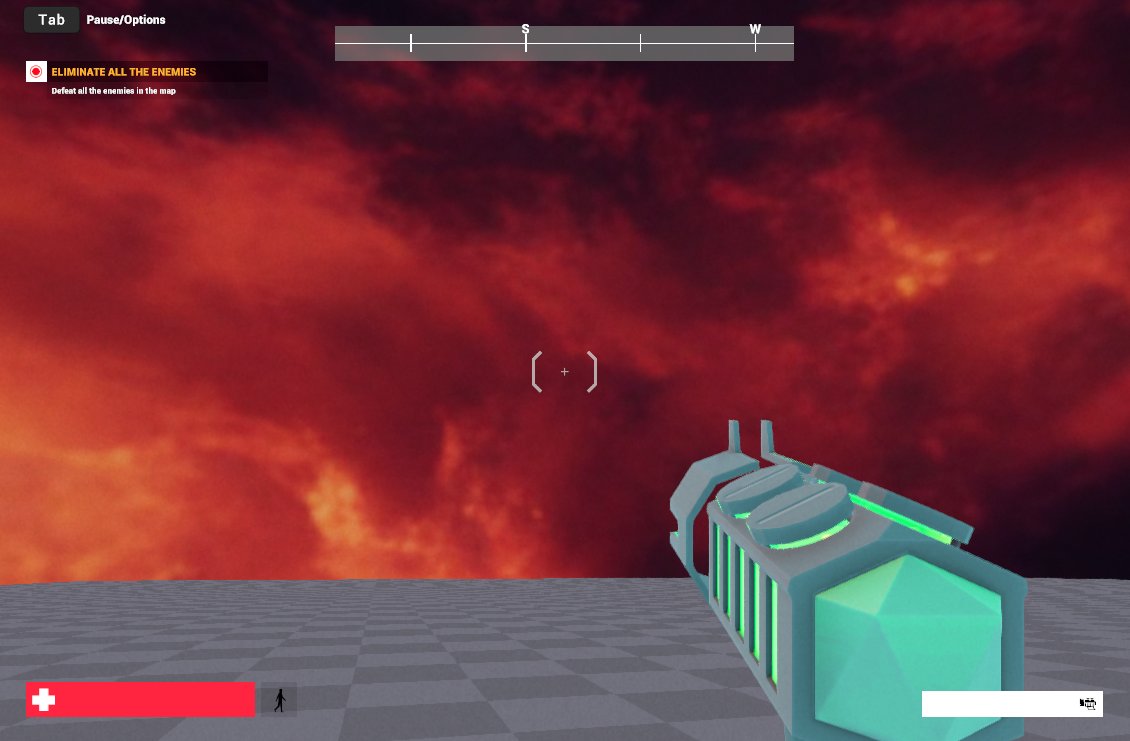

Follow the exploration below, esp. if you're in the #gaming industry (Game dev, Game Artist, Creative Director, etc.). Content production is about to be transformed.

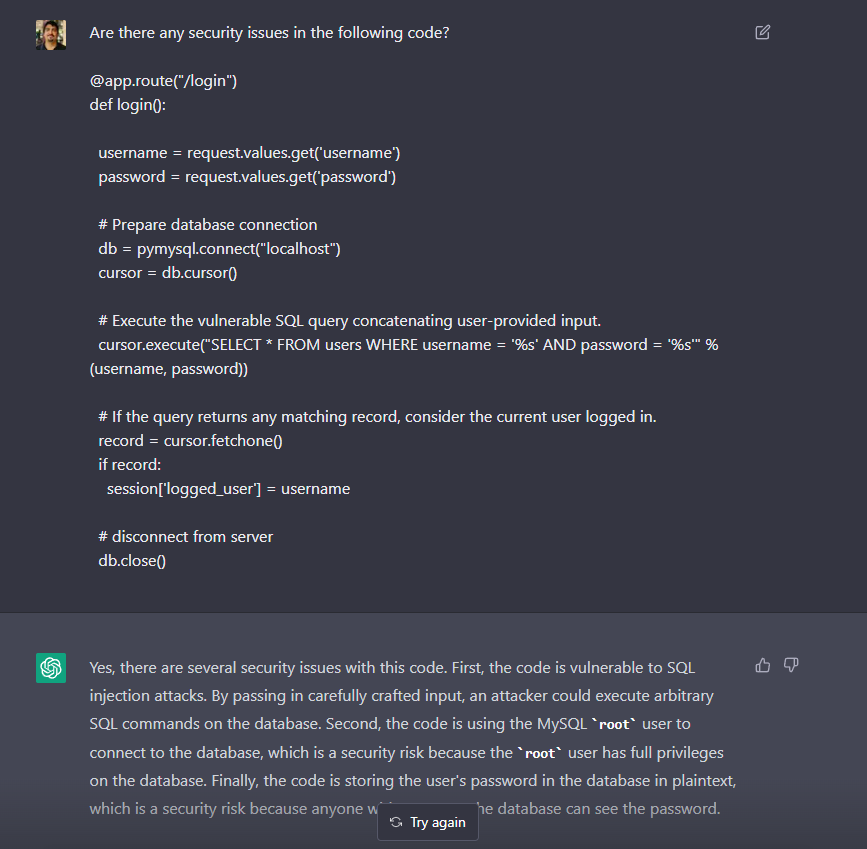

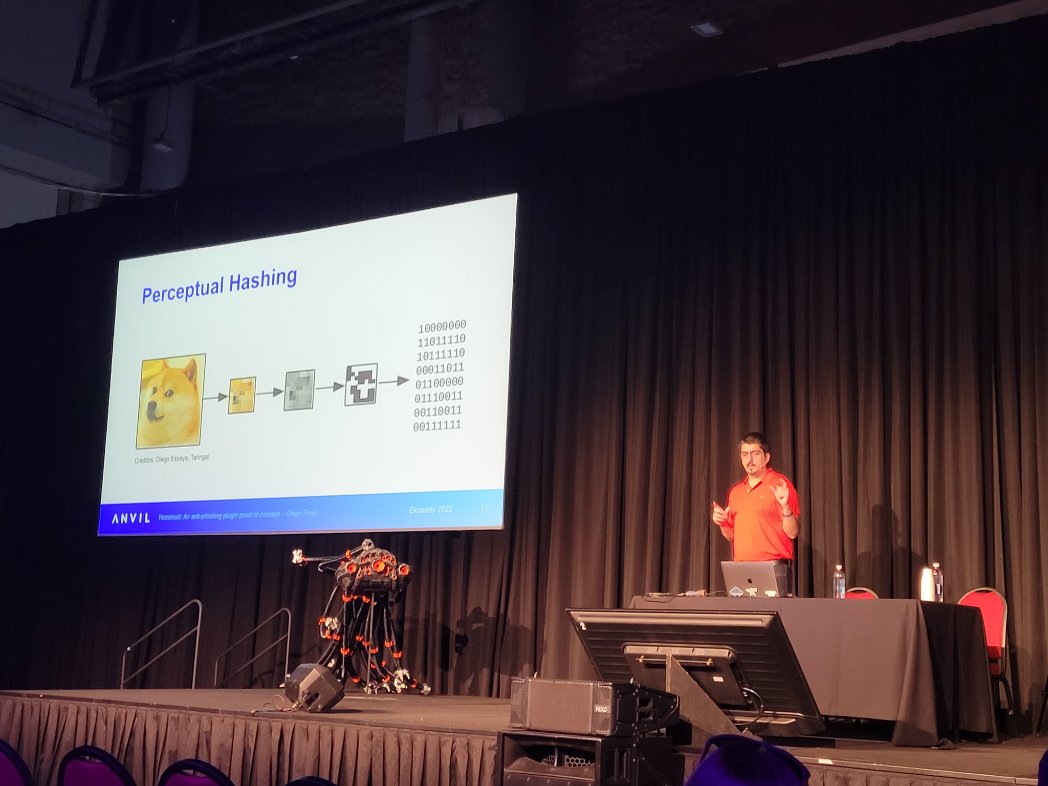

@DiegoFreijo, Senior Security Engineer & Manager at @anvil_secure

Hominoid: An anti-phishing plugin proof of concept

Save your seat: ekoparty.org/r/k5j

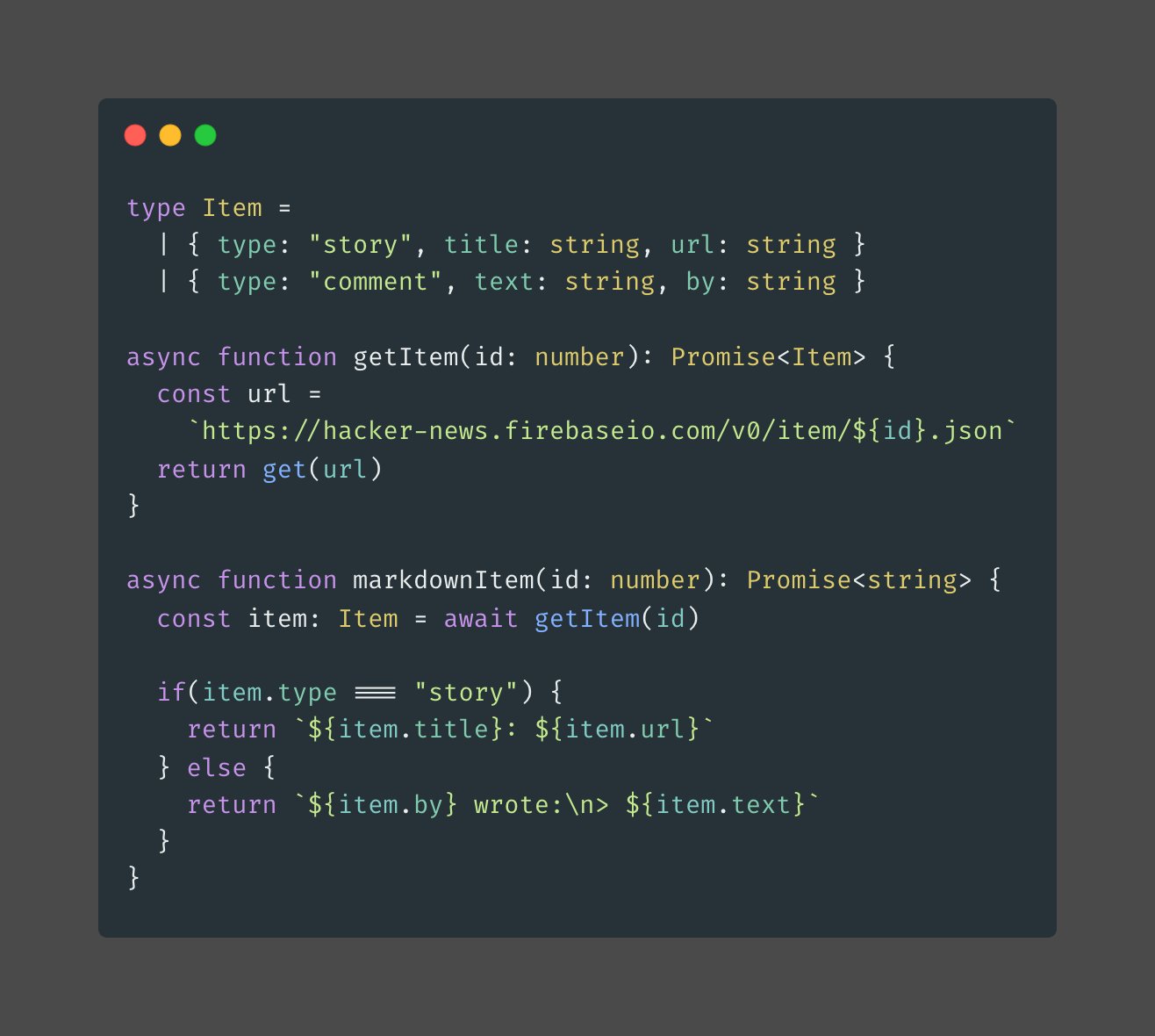

Union Types

UT are great at solving families of runtime errors. They help us define the exact shape of the data we're working with.

Example below!
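Here's a minimal sketch of the idea in TypeScript. The `RequestState` type and the `describeState` function are made up for illustration, they're not from any particular library. Each variant of the union carries only the fields that make sense for it, so the compiler won't let you read `data` from a failed request:

```typescript
// Hypothetical shape for the state of a network request.
// Each variant is tagged by `status` and carries only its own fields.
type RequestState =
  | { status: "loading" }
  | { status: "success"; data: string }
  | { status: "error"; message: string };

// The switch narrows `state` to one variant per case, so accessing
// `state.data` outside the "success" branch is a compile-time error.
function describeState(state: RequestState): string {
  switch (state.status) {
    case "loading":
      return "Loading...";
    case "success":
      return `Got: ${state.data}`;
    case "error":
      return `Failed: ${state.message}`;
    default: {
      // If a new variant is added and not handled above, this
      // assignment stops compiling, which flags the missing case.
      const unreachable: never = state;
      return unreachable;
    }
  }
}

console.log(describeState({ status: "success", data: "wizard.png" }));
```

The `never` check in the `default` branch is one common way to get exhaustiveness checking. It's a design choice, not a requirement of union types themselves.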
